Coding at its most fun is exploratory. It's exciting to try your hand at something new and see how it develops, choosing a route as you go along. Some people like to call this "expanding your ignorance", to convey that you cannot decide on things you don't know about, so first you have to become aware - and ignorant - of them. Then you can tackle them. If you want a buzzword for this, I suppose you could call it "impulse driven development".

spiderfetch was driven completely by impulse. The original idea was to get rid of awkward, one-time grep/sed/awk parsing to extract urls from web pages. Then came the impulse "hey, it took so much work to get this working well, why not make it recursive at little added effort". And from there on countless more impulses happened, to the point that it would be a challenge to recreate the thought process from there to here.

Eventually it landed on a 400 line ruby script that worked quite nicely and supported recipes to drive the spider, along with various other gimmicks. Because the process was completely driven by impulse, the code became increasingly dense and monolithic as more impulses were realized. And it got to the point where the code worked, but was pretty much a dead end from a development point of view. Generally speaking, the deeper you go into a project, the smaller an idea has to be if it is to be realized without major changes.

Introducing the web

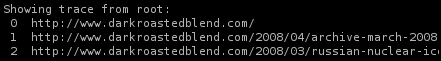

The most disruptive new impulse was that since we're spidering anyway, it might be fun to collect these urls in a graph and be able to do little queries on them. At the very least things like "what page did I find this url on" and "how did I get here from the root url" could be useful.

spiderfetch introduces the web, a local representation of the urls the spider has seen, either visited (spidered) or matched by any of the rules. Webs are stored, quite simply, in .web files. Technically speaking, the web is a graph of url nodes, with a hash table frontend for quick lookup and duplicate detection. Every node carries information about incoming urls (locations where this url was found) and outgoing urls (links to other documents), so the path from the root to any given url can be traced.
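To make the structure a bit more concrete, here is a minimal sketch of such a graph in python. It is not the actual spiderfetch web module: the names `Node`, `Web`, `add_link` and `path_from_root` are made up for illustration, and saving to a .web file is left out entirely. A dict plays the role of the hash table frontend, and every node keeps its incoming and outgoing urls.

```python
from collections import deque


class Node:
    """One url in the web, with the links pointing to it and away from it."""

    def __init__(self, url):
        self.url = url
        self.incoming = set()  # urls of pages where this url was found
        self.outgoing = set()  # urls this page links to


class Web:
    """Graph of url nodes with a dict frontend (hypothetical sketch)."""

    def __init__(self, root):
        self.root = root
        self.index = {}  # url -> Node: quick lookup and duplicate detection
        self.add_url(root)

    def add_url(self, url):
        # Reuse the existing node if we have seen this url before.
        node = self.index.get(url)
        if node is None:
            node = Node(url)
            self.index[url] = node
        return node

    def add_link(self, source, target):
        # Record the link in both directions.
        self.add_url(source).outgoing.add(target)
        self.add_url(target).incoming.add(source)

    def path_from_root(self, url):
        """Return a shortest list of urls from the root to url, or None."""
        if url not in self.index:
            return None
        parent = {self.root: None}
        queue = deque([self.root])
        while queue:
            current = queue.popleft()
            if current == url:
                path = []
                while current is not None:
                    path.append(current)
                    current = parent[current]
                return path[::-1]
            for target in self.index[current].outgoing:
                if target not in parent:
                    parent[target] = current
                    queue.append(target)
        return None
```

With something like this, "what page did I find this url on" is just `web.index[url].incoming`, and "how did I get here from the root url" is `web.path_from_root(url)`. The breadth-first search is my choice for the sketch; any traversal that remembers parents would do.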

Detecting file types

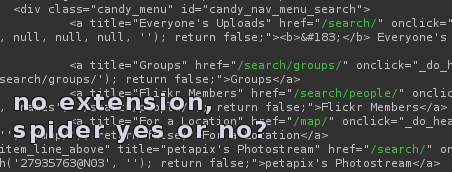

Aside from the web impulse, the single biggest flaw in spiderfetch was the lack of logic to deal with filetypes. Filetypes on the web work pretty much as well as they do on your local computer, which is to say: rename a .jpg to a .gif and suddenly it's not a .jpg anymore. File extensions are a very weak form of metadata and largely useless. It's the same with spidering: if you find a url on a page, you have no idea what it is. If it ends in .html then it's probably html, but it might also have no extension at all. Or the extension can be misleading, which, when taken to perverse lengths (eg. scripts like gallery), does away with .jpgs altogether and encodes everything as .php.

In other words, file extensions tell you nothing that you can actually trust. And that's a crucial distinction: what information do I have vs what can I trust. In Linux we deal with this using magic. The file command opens the file, reads a portion of it, and scans for well known content that would identify the file as a known type.

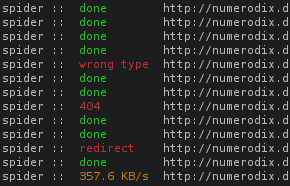

For a spider this is a big roadblock, because if you don't know which urls are actual html files that you want to spider, you pretty much have to download everything, including potentially large files like videos that are a complete waste of time (and bandwidth). So spiderfetch brings the "magic" principle to spidering. We start a download and wait until we have enough of the file to check the type. If it's the wrong type, we abort. Right now we only detect html, but there is potential for extending this with all the information the file command has (this would involve writing a parser for "magic" files, though).
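The idea can be sketched in a few lines. This is not the spiderfetch code, just an illustration under some assumptions: the function names, the 1024 byte sniff size and the list of html markers are all mine, and the check is far cruder than what the file command does. It works on any file-like object, so it can be tried without touching the network.

```python
import re

# Assumed heuristic: a handful of tags that tend to show up early in html.
HTML_MARKERS = re.compile(
    rb"<\s*(!doctype\s+html|html|head|body|title)\b", re.IGNORECASE
)


def looks_like_html(head):
    """Guess from the first bytes of a file whether it is html."""
    return HTML_MARKERS.search(head) is not None


def read_if_html(stream, sniff_bytes=1024, chunk_size=8192):
    """Read the start of stream; continue only if it looks like html.

    Returns the full content as bytes, or None if the download was
    abandoned after the first sniff_bytes bytes.
    """
    head = stream.read(sniff_bytes)
    if not looks_like_html(head):
        return None  # wrong type: stop here, the caller closes the stream
    chunks = [head]
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            break
        chunks.append(chunk)
    return b"".join(chunks)
```

In python 3 the response object returned by `urllib.request.urlopen(url)` has a `read` method like this, so something along the lines of `with urllib.request.urlopen(url) as response: body = read_if_html(response)` should abort a video download after the first kilobyte or so.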

A brand new fetcher

To make filetype detection work, we have to be able to do more than just start a download and wait until it's done. spiderfetch has a completely new fetcher in pure python (no more calling wget). The fetcher is actually the whole reason why the switch to python happened in the first place. I was looking through the ruby documentation to see what I needed from the library and I soon realized it wasn't cutting it. The http stuff was just too puny. I looked up the same topic in the python docs and immediately realized that it would support what I wanted to do. In retrospect, the python urllib/httplib libraries have covered me very well.

The fetcher has to do a lot of error handling on all the various conditions that can occur, which means it also has a much deeper awareness of the possible errors. It's very useful to know whether a fetch failed on 404 or a dns error. The python library also makes it easy to customize what happens on the various http status codes.
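As a rough illustration of telling these failures apart, here is a sketch using the python 3 names (`urllib.request` and `urllib.error`, the successors of the urllib/httplib modules mentioned above). The `classify_error` and `fetch` functions and the labels they return are invented for this example and are not the spiderfetch fetcher.

```python
import socket
import urllib.error
import urllib.request


def classify_error(exc):
    """Turn an exception from a failed fetch into a short label."""
    # HTTPError is a subclass of URLError, so it has to be checked first.
    if isinstance(exc, urllib.error.HTTPError):
        return "http %d" % exc.code  # the server answered, eg. with a 404
    if isinstance(exc, urllib.error.URLError):
        # URLError wraps the underlying problem in its reason attribute.
        if isinstance(exc.reason, socket.gaierror):
            return "dns"  # the hostname could not be resolved
        if isinstance(exc.reason, socket.timeout):
            return "timeout"
        return "connection"
    if isinstance(exc, socket.timeout):
        return "timeout"  # timeouts while reading are raised directly
    return "unknown"


def fetch(url, timeout=10):
    """Return (content, None) on success or (None, label) on failure."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.read(), None
    except (urllib.error.URLError, socket.timeout) as exc:
        return None, classify_error(exc)
```

A spider could use the label to decide what to do next, for example retrying on "timeout" but giving up straight away on "http 404" or "dns".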

A modular approach

The present python code is a far cry from the abandoned ruby codebase. For starters, it's three times larger. Python may be a little more verbose than ruby, but the increase is due to a new modularity and, most of all, new features. While the ruby code had eventually evolved into one big chunk of code, the python codebase is a number of modules, each of which can be extended quite easily. The spider and fetcher can both be used on their own, there is the new web module to deal with webs, and there is spiderfetch itself. dumpstream has also been rewritten from shell script to python and has become more reliable.

Grab it from github:

June 28th, 2008
