Graphs, they are fun. You can use them for all sorts of things, like drawing a picture of the internet (or your favorite regular expression!)

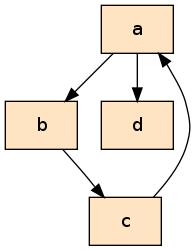

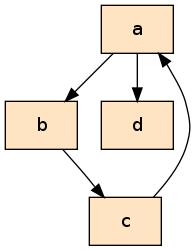

For example, look at that handsome guy there on the right. Is that a gorgeous graph, or what? (Incidentally, if you aren't yet a fan of Graphviz, it's time to become one. It's superb!)

Now take a gander at the code snippet below; that's the Python representation of it.

    class Node(object):
        def __init__(self, name):
            self.name = name

    a, b, c, d = Node('a'), Node('b'), Node('c'), Node('d')
    a.refs = [b, d]
    b.refs = [c]
    c.refs = [a]
    d.refs = []

But it's not there just to be stared at, so let's do something with it! One thing we could do is go over the graph and print out the nodes. But let's do one better, let's also show how deep into the graph we are by indenting the output! What we want is this:

    a
    __b
    ____c
    __d

We don't have the nice arrows here, but you can still make out the shape of the graph.

First iteration

    def traverse_naive(node, depth):
        print('__'*depth + node.name)
        for ref in node.refs:
            traverse_naive(ref, depth+1)

Very simple. Print the name of the node at the current level of depth, and then recurse down the outgoing edges. But when we run this something bad happens:

    >>> traverse_naive(a, 0)
    a
    __b
    ____c
    ______a
    ________b
    ..
    RuntimeError: maximum recursion depth exceeded

There's no sign of d; instead we keep going round and round along the path a-b-c-a. Whoops.

Preempting cycles

Traditionally, graph traversal algorithms have set properties on the nodes of the graph to record that a particular node has been seen before. For example, we could set node.been_here_before = True every time we enter a node, and then we could check for this property to make sure we don't re-enter the node later.

But this is not awesome, because we then have to change the graph as we traverse it. What if we want to traverse it again later, do we then need another traversal algorithm to remove all the markers or what?
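
To make the drawback concrete, here is a minimal sketch of the marking approach. The function name traverse_marking and the out list (which collects the lines instead of printing them) are made up for illustration; been_here_before is the property mentioned above. Running it twice on the same graph shows the leftover markers getting in the way.

```python
class Node(object):
    def __init__(self, name):
        self.name = name
        self.refs = []


def traverse_marking(node, depth, out):
    # Hypothetical helper: marks each node in place as it is visited.
    if getattr(node, 'been_here_before', False):
        return
    node.been_here_before = True
    out.append('__' * depth + node.name)
    for ref in node.refs:
        traverse_marking(ref, depth + 1, out)


# Same graph as in the snippet above.
a, b, c, d = Node('a'), Node('b'), Node('c'), Node('d')
a.refs = [b, d]
b.refs = [c]
c.refs = [a]

first_run = []
traverse_marking(a, 0, first_run)

second_run = []
traverse_marking(a, 0, second_run)

print(first_run)   # ['a', '__b', '____c', '__d']
print(second_run)  # [] because every node is still marked
```

The second run comes back empty unless something clears the markers first.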

There is another way to do this, however. We can use a data structure completely outside the graph in which we keep track of what we've seen so far (imagine a guy walking around a warehouse with a clipboard, he then doesn't have to mark any of the merchandise!).

So instead of checking the value of node.been_here_before, we're going to check the value of cache[node].

Now, there are two main strategies for where to do this check. We can do it right before the recursive call, or right after.

    def traverse_check_before(node, depth, cache):
        cache[node] = None
        print('__'*depth + node.name)
        for ref in node.refs:
            # we are about to recurse, first check if the node we want
            # to recurse on is in the cache
            if ref in cache:
                print('Already in cache: %s' % ref.name)
                continue
            # recurse if we reach this point
            traverse_check_before(ref, depth+1, cache)

    def traverse_check_after(node, depth, cache):
        # we have just recursed, return if this node is already in
        # the cache
        if node in cache:
            print('Already in cache: %s' % node.name)
            return
        cache[node] = None
        print('__'*depth + node.name)
        for ref in node.refs:
            # recurse without exception
            traverse_check_after(ref, depth+1, cache)

Here it doesn't matter which one we use, so we're just going to use the second option.

Second iteration

At this point you might be thinking that everything is going swimmingly, but in fact a small problem has crept up. It cannot have escaped your attention that we've snuck another parameter into that function definition. This means that we have to call the function like this:

    >>> traverse_check_after(a, 0, {})
    a
    __b
    ____c
    Already in cache: a
    __d

The function works, but we really, really don't want to have to do it like this. There is no reason the caller has to know about the cache, let alone that it is a dictionary. And if we ever decide to change the cache mechanism, we have to update all the client code.

So what can we do? We can't set up the cache in the function body, because the function is recursive!

This is where you might get that look in your eye that you get when you're being clever. Hah, but what about this:

    def traverse_cached(node, depth, cache={}):
        if node in cache:
            print('Already in cache: %s' % node.name)
            return
        cache[node] = None
        print('__'*depth + node.name)
        for ref in node.refs:
            traverse_cached(ref, depth+1, cache=cache)

Brilliant! We have to set up the cache neither inside the function body nor at the call site. This cache has to be initialized somewhere between the call and the function being executed, but somehow we've found a magical in-between, haven't we!

Keyword parameters really are a tremendous boon, but unfortunately they will not save our skin this time. Do you know what happens if we do this?

    >>> traverse_cached(a, 0)
    >>> traverse_cached(a, 0)

Read and weep:

    >>> traverse_cached(a, 0)
    a
    __b
    ____c
    Already in cache: a
    __d
    >>> traverse_cached(a, 0)
    Already in cache: a

Keyword parameters

Keyword parameters don't do what you thought they did. You thought traverse_cached would get a new dictionary every time it was called without a cache argument. But that's not what happens. (But isn't Python magical???)

In fact, it works like this. The default value of the cache parameter is evaluated once and for all when the def statement is executed, that is, when the function is defined. Since that value is an empty dictionary, the dict object is initially empty. But every time you call the function without a cache argument, it's the same dictionary that gets passed in! :eek:
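
Here is a small sketch that makes the "once and for all" part visible. The names make_cache, lookup and evaluations are invented for this illustration; the point is only that the expression after the equals sign runs a single time, when the function is defined, and not on every call.

```python
evaluations = []


def make_cache():
    # Record every time the default expression is actually evaluated.
    evaluations.append('evaluated')
    return {}


def lookup(key, cache=make_cache()):
    # make_cache() above already ran, at the moment this def was executed.
    cache[key] = None
    return cache


first = lookup('a')
second = lookup('b')

print(len(evaluations))   # 1
print(first is second)    # True
print(sorted(second))     # ['a', 'b']
```

Both calls get the very same dictionary back, and it remembers the key from the earlier call.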

Third iteration

It's come to this, I'm afraid. The problem is unsurmountable. We can't not pass the cache parameter. But we definitely don't want to do it at the call site. Which leaves no other option than...

    def traverse_cached_fixed(node, depth):
        def rec(node, depth, cache):
            if node in cache:
                print('Already in cache: %s' % node.name)
                return
            cache[node] = None
            print('__'*depth + node.name)
            for ref in node.refs:
                rec(ref, depth+1, cache)
        rec(node, depth, {})

I really hate using an inner function just for this. It makes things messier: you have to route the arguments through an extra function call. But that's the price you pay, I'm afraid.
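
That said, there is a commonly used idiom that sidesteps the inner function: default the parameter to None and create the dictionary in the body when it is missing. The sketch below is one way that might look, not a definitive version. The name traverse_none_default is invented here, and it returns the lines rather than printing them so that two runs can be compared.

```python
class Node(object):
    def __init__(self, name):
        self.name = name
        self.refs = []


def traverse_none_default(node, depth, cache=None):
    # None is immutable, so sharing it between calls is harmless.
    if cache is None:
        cache = {}  # a fresh dict for every top-level call
    if node in cache:
        return ['Already in cache: %s' % node.name]
    cache[node] = None
    lines = ['__' * depth + node.name]
    for ref in node.refs:
        lines.extend(traverse_none_default(ref, depth + 1, cache))
    return lines


# Same graph as before.
a, b, c, d = Node('a'), Node('b'), Node('c'), Node('d')
a.refs = [b, d]
b.refs = [c]
c.refs = [a]

first = traverse_none_default(a, 0)
second = traverse_none_default(a, 0)

print('\n'.join(first))
print(first == second)  # True
```

The caller still never sees the cache, and the second call starts from a clean slate.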

Postscript

By now you must be fuming at the fact that I've come all this way while pretending that the function call didn't have another parameter that was completely pointless and indeed deserving of the very same criticism.

Well, the difference is that you can set depth=0 as a keyword parameter in the function definition, so that you don't have to pass it. And this doesn't break anything. But why??

The answer is found when poking around Python. A function is in fact a mutable object. Have a look:

    def f(a=0):
        a = 1

    >>> print(f.__defaults__)
    (0,)

The `__defaults__` attribute (spelled `func_defaults` in Python 2) is the tuple that stores the values of the keyword parameters which have a default value. The value we see here is the integer 0, and it will not change no matter how many times we execute the function.

But collection types (and your own types too) are different:

    def g(a=[]):
        a.append(1)
        print(a)

    >>> print(g.__defaults__)
    ([],)
    >>> g()
    [1]
    >>> print(g.__defaults__)
    ([1],)
    >>> g()
    [1, 1]

Different as they look, the two cases actually work the same way (sorta). The binding of the keyword default is constant: it always points to the same object. With an integer, that object is the immutable 0, and a = 1 inside the function only rebinds the local name a. With "heavier" objects the default is likewise bound to one object, but that object's inner properties can very well mutate!!!
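
The same distinction shows up even with a list, depending on whether the function rebinds the name or mutates the object. A small sketch, with rebind and mutate as names invented here, and using `__defaults__`, which is the Python 3 spelling of func_defaults:

```python
def rebind(a=[]):
    # Builds a new list and rebinds the local name; the default is untouched.
    a = a + [1]
    return a


def mutate(a=[]):
    # Changes the default list object itself, in place.
    a.append(1)
    return a


rebind()
rebind()
mutate()
mutate()

print(rebind.__defaults__)  # ([],)
print(mutate.__defaults__)  # ([1, 1],)
```

Only the in-place change leaks from one call into the next.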

March 21st, 2010

March 12th, 2010
